Parameterized Analysis in Artificial Intelligence (Research Project)

- Funding organization: Austrian Science Fund (FWF)

- Project number: Y 1329 START-Programm (ParAI)

- Grant DOI: 10.55776/Y1329

Project Team

Picture credits: Soeren Nickel, 2020

Research Statement

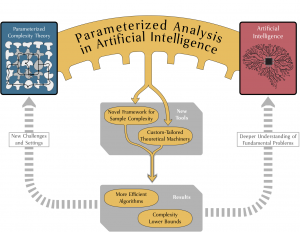

Parameterized complexity theory is a well-established paradigm for the fine-grained analysis of computational problems. Such an analysis can yield efficient algorithms that exploit subtle structural properties of relevant inputs, as well as powerful lower bounds that rule out efficient algorithms even for severely restricted instances. Parameterized complexity analysis has found great success across numerous fields of computer science, with notable examples including graph algorithms, computational geometry, database theory, computational logic and constraint satisfaction. In the highly prominent fields of artificial intelligence (AI) and machine learning (ML), which have become a ubiquitous part of today's society, there is a distinct lack of foundational research targeting the fine-grained, parameterized complexity of fundamental problems. The goal of this six-year project is to change this.

A Parameterized Toolbox for Problems in AI and ML

One main objective of this project is the development of new tools and machinery that allow us to apply the parameterized complexity framework to problems in AI and ML. Most of the existing tools in parameterized complexity theory are designed for discrete problems on graphs, whereas many problems of interest in AI and ML do not admit straightforward graph representations and/or contain non-discrete components. The development of the required tools will go hand in hand with obtaining new algorithms and matching lower bounds for the studied problems.